An artificial intelligence (AI) algorithm developed based on concepts commonly employed in economics and meteorology can yield a high level of accuracy for identifying COVID-19 on patients' chest radiographs, according to research from the University of Hong Kong (HKU). It also significantly outperformed radiologists.

The study, which is currently being reviewed by Nature Research, shares the university's experience with developing "nowcast" deep-learning models to help in the fight against COVID-19. Their second-generation model was more accurate overall than the radiologists in the study, with particularly strong results in patients with COVID-19 who were asymptomatic or who had early symptoms.

"While prospective validation will be needed, it is promising that our models were able to perform well in actual prospectively collected COVID-19 screening cohorts," the authors wrote. "Thus, our algorithm, together with the widespread availability of [chest x-ray], could lend itself as a potentially fast, sensitive, and [cost-effective] triaging tool, reducing the burden of already severely stretched healthcare systems."

Nowcasting

Outbreaks due to emergent pathogens like COVID-19 are difficult to contain, as the time to gather sufficient information needed to develop and then scale up an optimal detection system is often outpaced by the speed of transmission, according to senior author Dr. Michael Kuo, director of HKU's Medical AI Lab Program.

Although traditional deep-learning methods are powerful and could help shorten this window of vulnerability, they require large amounts of training data that are often critically absent in the early phases of an epidemic. Waiting until a sufficiently large number of cases accrues in the community -- and then collecting, curating, annotating, and using the data to train a deep-learning model -- loses valuable time and leaves responders further behind the curve of containment, he said.

To help with this challenge, Kuo and colleagues propose an alternative to the traditional paradigm of medical deep-learning model development, based on the concept of "nowcasting" -- a common approach in economics and meteorology that involves making real-time assessments based on current up-to-date knowledge.

"By efficiently leveraging emerging and evolving COVID-19 knowledge, data, and models at hand, we show that it is possible to successively and iteratively increase performance while keeping apace and even ahead of current practice standards and knowledge," he told AuntMinnie.com. "We propose that nowcast [deep-learning] models could play an important role in constraining ground-zero events where speed of development and deployment of defensive countermeasures are crucial, yet initial information is sparse."

Initial efforts

The group initially developed a deep-learning model for general pneumonia detection (MAIL1.0) and then tested it on 455 cases with negative and 59 cases with positive COVID-19 results on reverse transcription polymerase chain reaction (RT-PCR) testing. As of March 2, those COVID-19 patients represented 58% of all confirmed cases in Hong Kong.

The researchers then compared the model's performance with interpretations of two board-certified radiologists with more than 10 years of experience. The radiologists independently evaluated the chest x-rays, with disagreements resolved by a third radiologist.

Although MAIL1.0 produced strong results for finding visible pneumonia, it was only 54.2% sensitive for identifying COVID-19 patients. As more COVID-19 cases were accumulated, the researchers were able to develop an updated model -- MAIL2.0 -- designed specifically to predict COVID-19 status.

They then validated MAIL2.0 on an independent and prospectively collected study cohort of 248 patients. Of these, 176 had negative RT-PCR tests and 72 were positive. The model's performance was again compared with the consensus results from the radiologists.

Performance for detecting COVID-19 on chest radiographs

| | Consensus radiologist interpretations | MAIL2.0 AI model |
| --- | --- | --- |
| Area under the curve | 0.47 | 0.81 |
| Sensitivity | 31.9% | 84.7% |
| Specificity | 62.5% | 71.6% |

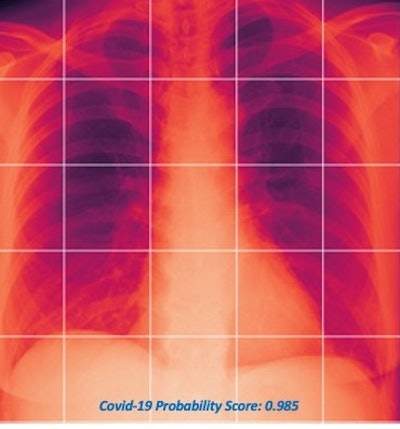

The MAIL2.0 AI model provides a probability score of 0 (low probability of COVID-19) to 1 (high probability) after analyzing chest radiographs. In this radiograph of a COVID-19 case, the model's probability score of 0.985 indicated a high probability of having the disease. Image courtesy of Dr. Michael Kuo.

The model significantly outperformed the radiologists (p < 0.001). Delving further into the results, the researchers found that the MAIL2.0 model correctly identified 40 (81.6%) of the 49 COVID-19-positive cases that were missed by the radiologists. On the other hand, the radiologists spotted two (18.2%) of the 11 COVID-19-positive cases that the model had missed.
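The reported sensitivities can be cross-checked against the missed-case counts above. The short sketch below is only an arithmetic illustration, not code from the study: it assumes that sensitivity is the share of the 72 RT-PCR-positive patients a reader flagged, and the function and variable names are hypothetical.

```python
# Arithmetic check of the article's figures (illustrative only; names are hypothetical).
POSITIVES = 72  # RT-PCR-positive patients in the MAIL2.0 validation cohort


def sensitivity(missed: int, positives: int = POSITIVES) -> float:
    """Fraction of RT-PCR-positive patients that a reader flagged as COVID-19."""
    return (positives - missed) / positives


# Missed-case counts as reported in the article.
model_sensitivity = sensitivity(missed=11)        # MAIL2.0 missed 11 positives
radiologist_sensitivity = sensitivity(missed=49)  # radiologists missed 49 positives

# Share of each reader's misses that the other reader caught.
model_rescue_rate = 40 / 49        # radiologist misses caught by MAIL2.0
radiologist_rescue_rate = 2 / 11   # MAIL2.0 misses caught by radiologists

print(f"MAIL2.0 sensitivity:      {model_sensitivity:.1%}")
print(f"Radiologist sensitivity:  {radiologist_sensitivity:.1%}")
print(f"MAIL2.0 rescue rate:      {model_rescue_rate:.1%}")
print(f"Radiologist rescue rate:  {radiologist_rescue_rate:.1%}")
```

Under that assumption, the results agree with the 84.7% and 31.9% sensitivities in the table and with the 81.6% and 18.2% figures quoted above.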

Other key findings:

- MAIL2.0 correctly identified 40 (80%) of the 50 COVID-19 patients who had fever or respiratory symptoms, compared with 16 (32%) detected by the radiologists. The difference was statistically significant (p < 0.001).

- MAIL2.0 accurately detected eight (88.9%) of the nine asymptomatic COVID-19 patients, while the radiologists only detected two (22.2%). The difference was also statistically significant (p = 0.02).

- MAIL2.0 identified 27 (81.8%) of the COVID-19 patients with early symptoms, compared with 10 (30.3%) found by the radiologists. The difference was statistically significant (p < 0.001).

Kuo said that they are now working on next-generation models that have shown continued improvement in performance.

"We are now working with international sites, deploying the software and models, expanding use, and doing further testing and validation to ensure broad usability," he said.

The group is also actively recruiting interested sites to participate in the project, Kuo said.