A new class of AI appears to move the technology closer to what radiologists are capable of when they interpret chest x-rays, according to an article published October 3 in Radiology.

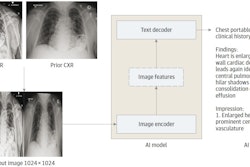

A group led by doctoral student and lead author Firas Khader, of University Hospital Aachen in Aachen, Germany, built a so-called "transformer-based neural network" that can combine multiple inputs, rather than relying on imaging findings alone, to make decisions.

"Models that are capable of combining both imaging and nonimaging data as inputs are needed to truly support physician decision-making," the team wrote.

Transformer-based neural networks were initially developed for the computer processing of human language and have since fueled large language models like ChatGPT and Google’s AI chat service, Bard.

Unlike convolutional neural networks, which are tuned to process imaging data, transformer models form a more general type of neural network, the authors explained. Essentially, they rely on what is called an attention mechanism, which allows the network to learn about relationships in its input.

"This capability is ideal for medicine, where multiple variables like patient data and imaging findings are often integrated into the diagnosis," Khader said, in a release from RSNA.
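As a rough illustration of the attention mechanism described above, the following minimal Python sketch computes scaled dot-product self-attention over a handful of input vectors. It is not the authors' model: the token values are made up, the names `softmax` and `self_attention` are hypothetical, and the learned query, key, and value projections that real transformers use are omitted for brevity.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with identity projections.

    Assumption: learned query/key/value projections are left out, so each
    token is compared with every other token directly.
    """
    dim = len(tokens[0])
    outputs, weights = [], []
    for query in tokens:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        row = softmax(scores)
        weights.append(row)
        # Each output is a weighted mix of all input tokens.
        outputs.append(
            [sum(w * tok[i] for w, tok in zip(row, tokens)) for i in range(dim)]
        )
    return outputs, weights

# Hypothetical toy inputs: two "image patch" embeddings and one
# "clinical parameter" embedding, all invented for illustration.
tokens = [
    [1.0, 0.0, 0.5, 0.0],
    [0.9, 0.1, 0.4, 0.0],
    [0.0, 1.0, 0.0, 0.5],
]
outputs, weights = self_attention(tokens)
```

Each row of `weights` sums to 1 and indicates how strongly one input attends to every other input. Because all inputs are treated as generic tokens, the same operation can relate an imaging token to a nonimaging one.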

To build the model, the group used a public dataset comprising chest x-ray images and nonimaging data from 53,150 ICU patients admitted between 2008 and 2019 at the Beth Israel Deaconess Medical Center in Boston, MA.

The researchers extracted a subset of 45,676 x-rays that showed the presence and severity of pleural effusion, atelectasis, pulmonary opacities, pulmonary congestion, and cardiomegaly. This input was matched and combined with 15 clinical parameters, including each patient's systolic, diastolic, and mean blood pressure, respiratory rate, body temperature, height, weight, and blood oxygen levels.

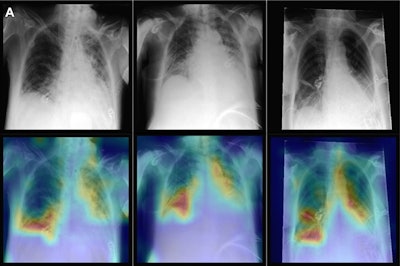

Representative radiographs (top), acquired in anteroposterior projection in the supine position, and corresponding attention maps (bottom). (A) Images show main diagnostic findings of the internal data set in a 49-year-old male patient with congestion, pneumonic infiltrates, and effusion (left); a 64-year-old male patient with congestion, pneumonic infiltrates, and effusion (middle); and a 69-year-old female patient with effusion (right). Image courtesy of Radiology.

The group then evaluated how the model performed when given combined imaging and nonimaging data (multimodal) versus imaging or nonimaging data alone (unimodal). The team tested it using an in-house dataset from 45,016 ICU patients admitted to their hospital in Aachen from 2009 to 2020.

Consistently, the model's area under the receiver operating characteristic curve (AUC) for detecting all pathologic conditions was higher when both imaging and nonimaging data were used than when either was used alone, according to the findings.

Specifically, the mean AUC was 0.84 when chest x-rays and clinical parameters were used, compared with 0.83 when only chest x-rays were used and 0.67 when only clinical parameters were used.
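For readers unfamiliar with the metric, AUC can be read as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. The short Python sketch below computes it that way. The function name `roc_auc` is hypothetical, and the labels and scores are invented toy values, not data from the study.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of positive/negative pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Toy example (made-up numbers): 1 = finding present, 0 = absent.
labels = [1, 1, 1, 0, 0, 0]
multimodal_scores = [0.9, 0.8, 0.4, 0.5, 0.2, 0.1]
clinical_only_scores = [0.6, 0.3, 0.15, 0.5, 0.2, 0.1]

auc_multimodal = roc_auc(labels, multimodal_scores)        # 8 of 9 pairs correct
auc_clinical_only = roc_auc(labels, clinical_only_scores)  # 6 of 9 pairs correct
```

An AUC of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation. A mean AUC like the ones reported here would be an average of such values across the pathologic conditions.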

"This study has demonstrated that a transformer model trained on large-scale imaging and nonimaging data outperformed models trained on unimodal data," the researchers wrote.

Future studies should investigate imaging scenarios other than the ICU to confirm the generalizability of such models, the researchers suggested. They also noted that large datasets spanning different modalities from x-ray to MRI, anatomies from head to toe, and various conditions are expected to become publicly available for these studies soon.

"This will constitute an ideal application and testing ground for the transformer models presented in this study," Khader and colleagues concluded.