Wednesday, November 30 | 12:15 p.m.-12:45 p.m. | LL-QSE3034-WEA | Lakeside Learning Center

This storyboard presentation from the University of Texas MD Anderson Cancer Center makes a strong argument for integrating a mandatory peer-review quality-assurance (QA) process into radiologists' exam interpretation workflow. The radiology department collects peer-review observations, eliminates potential selection bias, and meets, and regularly exceeds, its target of peer review for 5% of the reports the department generates. Radiologist Dr. Kevin McEnery will describe how an internally developed electronic system for collecting peer-review data outperforms both a paper-based system and an electronic one that is not fully integrated with the RIS/PACS.

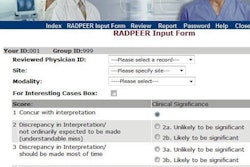

The data collection system is interfaced with RadPeer, the American College of Radiology's software program that allows peer review to be performed during the routine interpretation of current images. Five percent of the unread studies that the department's RIS identifies as having a comparison examination available are designated for mandatory QA review. The RIS does not permit finalization of the unread study until the QA evaluation is completed. A radiologist who disagrees with the interpretation scores the disagreement on the RadPeer scale and can enter text comments.
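The gating behavior described above can be sketched in a few lines. This is a minimal illustration only, not the department's actual system: the `Study` class, its field names, and the functions `designate_for_qa`, `record_qa`, and `finalize` are all hypothetical, and random sampling is an assumption about how the 5% of eligible studies might be chosen without selection bias.

```python
import random
from dataclasses import dataclass
from typing import Optional

QA_SAMPLE_RATE = 0.05  # the 5% target stated in the abstract


@dataclass
class Study:
    """Hypothetical record for one unread study in the RIS."""
    accession: str
    has_comparison: bool              # a comparison examination is available
    qa_required: bool = False
    qa_score: Optional[int] = None    # hypothetical field for the RadPeer score
    qa_comment: str = ""
    finalized: bool = False


def designate_for_qa(study: Study, rng: random.Random) -> bool:
    """Flag an eligible study at random (assumed), so the reader cannot choose."""
    if study.has_comparison and rng.random() < QA_SAMPLE_RATE:
        study.qa_required = True
    return study.qa_required


def record_qa(study: Study, score: int, comment: str = "") -> None:
    """Store the reviewer's score and optional text comment."""
    study.qa_score = score
    study.qa_comment = comment


def finalize(study: Study) -> None:
    """Refuse to finalize a flagged study until its QA evaluation exists."""
    if study.qa_required and study.qa_score is None:
        raise RuntimeError("QA evaluation must be completed before finalization")
    study.finalized = True
```

Placing the check inside `finalize` mirrors the point of the abstract: the review cannot be skipped, because the report cannot be signed off without it.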

Radiologists are also encouraged to perform voluntary peer review and enter comments. During a three-month evaluation period starting in January 2011, 15,567 peer-review analyses were conducted. Of these, more than one-fourth (26.8%) were voluntarily submitted. Fewer than 2% of the total generated any disagreement with the reported findings; 278 studies were referred to QA review for analysis.
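For readers who want the reported percentages as counts, the arithmetic can be checked as follows. The voluntary count is approximate because 26.8% is itself a rounded figure, and the abstract does not say whether the 278 referred studies are the same set as the disagreements; the snippet only shows that 278 is consistent with a share below 2%. The variable names are illustrative.

```python
total_reviews = 15_567    # peer-review analyses in the three-month period
voluntary_share = 0.268   # reported share submitted voluntarily
referred = 278            # studies referred to QA review

# Approximate counts implied by the rounded percentage.
voluntary_reviews = round(total_reviews * voluntary_share)
mandatory_reviews = total_reviews - voluntary_reviews

# Referred studies as a fraction of all analyses.
referred_share = referred / total_reviews

print(voluntary_reviews)         # 4172
print(mandatory_reviews)         # 11395
print(f"{referred_share:.2%}")   # 1.79%
```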