An artificial intelligence (AI) algorithm can help radiologists better detect pneumothorax on chest radiography in patients after lung biopsies, according to a study published online January 25 in Radiology.

South Korean researchers tested a deep learning-based software application to see how well it assisted radiologists in identifying pneumothorax. Radiologists using the AI software outperformed radiologists who did not have access to it, they found. Use of the AI software was also linked to slightly faster treatment and better patient outcomes in a real-world clinical setting, the authors wrote.

"We believe that the [AI] system can help improve the safety of patients receiving lung biopsy and, furthermore, may be used to promptly detect and timely manage pneumothorax of any cause," wrote principal investigator Dr. Chang Min Park, PhD, of Seoul National University.

Pneumothorax -- air around or outside the lung -- is the most common complication of percutaneous transthoracic needle biopsies (PTNBs), which are performed in patients with suspected lung tumors. Most cases of pneumothorax can be easily managed, yet in up to 15% of patients, clinical interventions such as the insertion of drainage tubes are needed to prevent respiratory failure. Chest x-rays are performed after biopsies to determine if pneumothorax has occurred.

"However, in clinical practice, with an excessive workload, prompt and accurate interpretation of radiographs is not always possible, resulting in a delayed diagnosis of pneumothorax," the authors wrote.

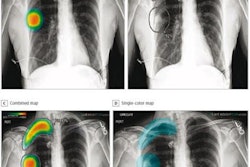

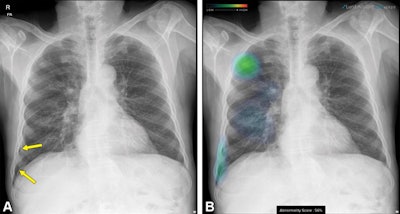

Follow-up chest x-ray in a 68-year-old man after percutaneous transthoracic needle biopsy for a nodule in the right upper lobe. (A) Original image shows a small pneumothorax developed in the right lower pleural space (arrows). (B) Additional image with an overlaid heat map from the AI algorithm highlights the location of the nodule and pneumothorax, which was in an unusual spot and could have been missed. Image courtesy of Radiology.

Previous studies using x-ray datasets have shown the utility of AI algorithms for diagnosing pneumothorax on chest x-rays, with area under the curve (AUC) values ranging from 0.91 to 0.98, but the algorithms' performance in real-world clinical practice has not been established, according to the authors.

In this retrospective study, the Korean researchers evaluated an AI software application (Lunit Insight CXR Triage, Lunit) after implementing it at their hospital in February 2020. They compared the results achieved by radiologists using AI with the earlier performance of radiologists working without AI in detecting pneumothorax in patients who had undergone PTNBs.

The study included 676 x-rays (from 655 patients) interpreted by radiologists with help from the AI software and 676 x-rays (from 664 patients) previously interpreted by radiologists without AI assistance. During the study period, a total of 60 radiologists or trainees with one to 30 years of experience in chest radiography interpretation participated.

A thoracic radiologist with nine years of clinical experience evaluated the performance of the AI software on heat map or contour line-overlaid images. In addition, the researchers identified pneumothorax cases that required subsequent catheter drainage and compared the time interval from chest radiography to drainage catheter insertion between the two groups.

The incidence of pneumothorax was 18.2% (123 of 676 x-rays) in the group interpreted with the help of AI software and 22.5% (152 of 676 x-rays) in the group interpreted by radiologists without AI (p = 0.05).
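As a rough check on the arithmetic above, the following Python sketch recomputes the two incidence rates and runs a Pearson chi-square test on the resulting 2x2 table. The article does not say which statistical test the authors used, so the choice of test (and the absence of a continuity correction) is an assumption made here for illustration; the function name is likewise hypothetical.

```python
import math

def two_proportion_chi_square(events_a, total_a, events_b, total_b):
    """Pearson chi-square test (1 df, no continuity correction) on a 2x2 table.

    Assumption: this test is used only for illustration; the study's actual
    statistical method is not stated in the article.
    """
    observed = [
        [events_a, total_a - events_a],
        [events_b, total_b - events_b],
    ]
    grand_total = total_a + total_b
    row_totals = [total_a, total_b]
    total_events = events_a + events_b
    column_totals = [total_events, grand_total - total_events]

    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * column_totals[j] / grand_total
            chi2 += (observed[i][j] - expected) ** 2 / expected

    # With 1 degree of freedom, the chi-square survival function
    # equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value

# Counts reported in the article: 123 of 676 (with AI), 152 of 676 (without AI).
with_ai = 123 / 676
without_ai = 152 / 676
chi2, p = two_proportion_chi_square(123, 676, 152, 676)
print(f"{with_ai:.1%} vs. {without_ai:.1%}, chi2 = {chi2:.2f}, p = {p:.2f}")
```

Under these assumptions, the rates round to the reported 18.2% and 22.5%, and the p-value rounds to 0.05.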

Use of AI software for detecting pneumothorax

|  | Radiologists without AI | Radiologists with AI |
| --- | --- | --- |
| Sensitivity | 67.1% | 85.4% |
| Negative predictive value | 91.3% | 96.8% |
| Accuracy | 92.3% | 96.8% |
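For readers less familiar with the three measures in the table, the sketch below shows how each is conventionally derived from confusion-matrix counts. The function name and the counts passed to it are hypothetical and chosen only for illustration; they are not taken from the study, which does not report its raw true/false positive and negative counts in this article.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Return (sensitivity, negative predictive value, accuracy).

    tp/fp/tn/fn are true-positive, false-positive, true-negative,
    and false-negative counts.
    """
    sensitivity = tp / (tp + fn)          # share of actual cases that were detected
    npv = tn / (tn + fn)                  # share of negative reads that were truly negative
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    return sensitivity, npv, accuracy

# Hypothetical counts for illustration only; NOT the study's data.
sensitivity, npv, accuracy = diagnostic_metrics(tp=80, fp=20, tn=880, fn=20)
print(f"sensitivity = {sensitivity:.1%}, NPV = {npv:.1%}, accuracy = {accuracy:.1%}")
```

Sensitivity and negative predictive value both fall as the number of false negatives rises, so both measures are sensitive to missed cases of pneumothorax.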

In other findings, sensitivity for small pneumothoraces was higher in the AI group than in the non-AI group (pneumothorax < 10%: 74.5% vs. 51.4%, p = 0.009; pneumothorax 10%-15%: 92.7% vs. 70.2%, p = 0.008).

In addition, use of the AI software changed patient outcomes, according to the authors. Fewer patients with pneumothorax required a subsequent drainage catheter when the AI software was used (2% vs. 5%; p = 0.009).

The mean time interval from follow-up chest radiography to drainage catheter insertion was 26.5 hours in the non-AI group and 25.2 hours in the AI group.

"The implementation of a deep learning-based [AI] system in clinical practice improved the sensitivity, negative predictive value, and accuracy of detecting pneumothorax on follow-up chest radiographs after percutaneous transthoracic needle biopsy," the researchers wrote.

Park and colleagues noted study limitations, including that the x-rays were read by 60 radiologists and trainees and that interpretation accuracy varies among thoracic radiologists, fellowship trainees, and residents. Yet that variation may also reflect real clinical settings, they stated.

"The most important strength of our study over previous studies dealing with similar topics was that we investigated whether this kind of [AI] system could enhance the diagnostic performance of radiologists in clinical practice," the authors concluded.