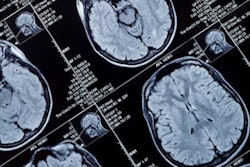

Using AI software with brain MR imaging improves diagnostic accuracy in monitoring for amyloid-related imaging abnormalities (ARIA) in patients undergoing beta amyloid-directed antibody therapies for Alzheimer's disease, researchers have found.

Using AI in this way could improve patient care, noted a team led by Diana Sima, PhD, of Icometrix in Leuven, Belgium. The group's findings were published February 12 in JAMA Network Open.

"Timely detection and accurate characterization and quantification of ARIA are essential to guide treatment decisions," the investigators wrote.

Patients undergoing beta amyloid-directed antibody therapies for Alzheimer's disease are at risk of amyloid-related imaging abnormalities, and require close monitoring, Sima and colleagues noted. But detecting ARIA and assessing its severity can be tricky.

"[Previous] studies demonstrate that radiologists with limited previous experience with ARIA may have difficulty identifying ARIA events in a large proportion of cases," they explained. "Hence, there may be an opportunity to increase accuracy of radiological reading via an assistive automated detection and diagnosis software tool, which, to our knowledge, currently is yet to be developed and validated."

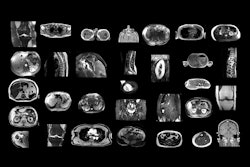

To this end, the team conducted a study to test an AI tool that supports the detection of ARIA. The research included 16 radiologists who read brain MRI exams from 199 patients who participated in aducanumab clinical trials. The radiologists read the exams with and without assistance from the AI software (Icometrix's icobrain aria). The readers had a mean of nine years of experience, and two (12.5%) were specialized neuroradiologists.

Among the patients, 52.8% were women and 78.9% were white. Patients underwent a predosing baseline MRI and a postdosing follow-up MRI. The study's two primary end points were the differences in diagnostic accuracy between assisted and unassisted detection of ARIA-E (edema and/or sulcal effusion) and of ARIA-H (microhemorrhage and/or superficial siderosis), both evaluated using the area under the receiver operating characteristic curve (AUC).
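For readers less familiar with the AUC, the following is a minimal sketch of one common way to compute it: as the probability that a randomly chosen positive case receives a higher reader score than a randomly chosen negative case. The function name `auc` and the toy scores are hypothetical and for illustration only; they are not study data, and this is not necessarily the statistical method the investigators used for their multireader analysis.

```python
def auc(scores_positive, scores_negative):
    """Rank-based AUC: the fraction of (positive, negative) pairs in which
    the positive case scores higher, counting ties as half a win."""
    wins = 0.0
    for p in scores_positive:
        for n in scores_negative:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_positive) * len(scores_negative))


# Hypothetical reader confidence scores (NOT from the study).
toy_aria_cases = [0.9, 0.8, 0.4]      # exams with ARIA present
toy_non_aria_cases = [0.7, 0.3, 0.2]  # exams without ARIA

toy_auc = auc(toy_aria_cases, toy_non_aria_cases)
print(f"Toy AUC: {toy_auc:.3f}")
```

An AUC of 0.5 corresponds to chance-level discrimination and 1.0 to perfect separation of positive and negative cases.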

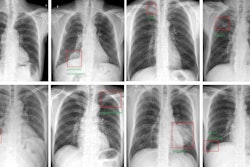

The software improved radiologists' AUC values and sensitivity for ARIA detection, although specificity was lower with assistance, the team found.

Radiologist interpretation performance of identifying amyloid-related imaging abnormalities on brain MRI, with and without AI assistance

| Measure | Unassisted interpretation | Assisted interpretation |
|---|---|---|
| AUC, ARIA-E detection | 0.82 | 0.87 |
| AUC, ARIA-H detection | 0.78 | 0.83 |
| Sensitivity, ARIA-E detection | 71% | 87% |
| Sensitivity, ARIA-H detection | 69% | 79% |
| Specificity, ARIA-E detection | 92% | 83% |
| Specificity, ARIA-H detection | 83% | 80% |
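
To make the size and direction of each change easier to see, here is a short sketch that computes assisted minus unassisted for every row of the table. The values are copied from the table; the names `results` and `deltas` are hypothetical, and the differences are simple subtractions that say nothing about statistical significance.

```python
# Values copied from the table: (unassisted, assisted).
# AUC is on a 0-1 scale; sensitivity and specificity are in percent.
results = {
    ("AUC", "ARIA-E"): (0.82, 0.87),
    ("AUC", "ARIA-H"): (0.78, 0.83),
    ("Sensitivity", "ARIA-E"): (71, 87),
    ("Sensitivity", "ARIA-H"): (69, 79),
    ("Specificity", "ARIA-E"): (92, 83),
    ("Specificity", "ARIA-H"): (83, 80),
}


def deltas(table):
    """Return assisted minus unassisted for each (measure, finding) pair."""
    return {
        key: round(assisted - unassisted, 2)
        for key, (unassisted, assisted) in table.items()
    }


for (measure, finding), change in deltas(results).items():
    unit = "" if measure == "AUC" else " percentage points"
    print(f"{measure}, {finding}: {change:+}{unit}")
```

Run on the table's values, sensitivity rises by 16 and 10 percentage points for ARIA-E and ARIA-H, while specificity falls by 9 and 3 percentage points, respectively.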

"[Our] study found that radiological reading performance for ARIA detection and diagnosis was significantly better when using the AI-based assistive software," the authors concluded. "[The] software has the potential to be a clinically important tool to improve safety monitoring and management of patients with AD treated with [beta-amyloid]-directed monoclonal antibody therapies."

The complete study can be found here.