Research into the effectiveness of diagnostic imaging tests may be skewed by small patient populations, a phenomenon that could undermine the accuracy of the findings, suggests a new study published August 25 in JAMA Network Open.

Diagnostic imaging research is rife with studies that have small sample sizes, which could lead them to report larger, more favorable effects than might be found in studies with larger patient populations. Researchers led by Dr. Lucy Lu from Flinders University in Bedford Park, Australia, wrote that evidence of this occurrence -- also known as the small-study effect -- can be found in their analysis of nearly 670 studies and over 80,000 patients.

"These findings have significant implications on the conduct and interpretation of meta-analyses in the diagnostic imaging literature, as they suggest that diagnostic accuracy estimates presented by many meta-analyses may be gross overestimates," Lu et al wrote.

The idea behind small-study effects is that studies with smaller sample sizes tend to report larger and more favorable effect estimates than studies with larger sample sizes. The most common reasons for this to happen include bias, heterogeneity, and pure coincidence.

Smaller studies are more prone to publication bias, in which manuscripts containing statistically significant or favorable results are more likely to be published. Outcome reporting bias, where selective reporting of only the more favorable outcomes occurs, is another contributor to small-study effects.
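To see how publication bias alone can produce a small-study effect, consider the toy simulation below. It is a minimal sketch and not the method used by Lu and colleagues: the true effect, the two sample sizes, the standard error formula, and the "publish only if significant" rule are all hypothetical assumptions chosen for illustration.

```python
import math
import random
from statistics import mean

def simulate_publication_bias(n_studies=2000, true_effect=0.5, seed=0):
    """Return the mean published effect for small and for large studies."""
    rng = random.Random(seed)
    published = {20: [], 500: []}  # hypothetical sample sizes
    for _ in range(n_studies):
        n = rng.choice([20, 500])
        se = 2 / math.sqrt(n)  # assumption: standard error shrinks with sqrt(n)
        estimate = rng.gauss(true_effect, se)
        # Assumption: only statistically significant positive results get published
        if estimate / se > 1.96:
            published[n].append(estimate)
    return mean(published[20]), mean(published[500])

small_mean, large_mean = simulate_publication_bias()
print(f"small studies: {small_mean:.2f}, large studies: {large_mean:.2f}")
```

Every simulated study measures the same true effect, yet the small studies that clear the significance filter report a noticeably larger average effect than the large ones, which pass the filter almost regardless of chance variation.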

Small-study effects caused by bias can impact meta-analyses by increasing the estimated pooled effect sizes. Detecting such bias by retrieving unpublished data is usually not feasible, so statistical tools such as funnel plots, the Egger test, and the Deeks test are used to detect these effects.
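As a rough illustration of how the Egger test works, the sketch below regresses each study's standardized effect (the effect divided by its standard error) on its precision (one divided by the standard error). An intercept far from zero points to funnel plot asymmetry. The function name and the six data points are hypothetical and do not come from the Lu study, and a full analysis would also convert the t statistic to a p-value.

```python
import math

def egger_intercept(effects, std_errors):
    """Egger regression by ordinary least squares.

    Returns (intercept, t_statistic) for the intercept.
    """
    y = [e / s for e, s in zip(effects, std_errors)]  # standardized effects
    x = [1 / s for s in std_errors]                   # precisions
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual variance with n - 2 degrees of freedom
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s2 = sum(r * r for r in residuals) / (n - 2)
    se_intercept = math.sqrt(s2 * (1 / n + mean_x ** 2 / sxx))
    return intercept, intercept / se_intercept

# Hypothetical data: less precise studies report larger effects
std_errors = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]
effects = [1.25, 1.35, 1.65, 1.75, 2.05, 2.15]
intercept, t_stat = egger_intercept(effects, std_errors)
print(intercept, t_stat)
```

In this made-up dataset the intercept is well above zero, the pattern expected when small, imprecise studies report inflated effects.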

In diagnostic imaging, small-study effects are not well understood. Researchers pointed out that "most" studies of diagnostic accuracy do not have a predefined study hypothesis, while most randomized clinical trials of interventions test a prespecified hypothesis.

The Lu team set out to determine the presence and magnitude of small-study effects in diagnostic imaging accuracy meta-analyses. They looked at data from 31 meta-analyses published between 2010 and 2019. The team included meta-analyses with 10 or more studies of medical imaging diagnostic accuracy, assessing a single imaging modality. A total of 80,206 patients were included in these meta-analyses.

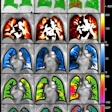

Researchers also used the natural logarithm of the diagnostic odds ratio (ln[DOR]) in detecting publication bias. The diagnostic odds ratio is a single summary measure of a test's accuracy: the odds of a positive result in patients with the disease divided by the odds of a positive result in patients without it. From there, they created a composite funnel plot by plotting each primary study's effect size against its precision.
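For readers unfamiliar with the measure, ln(DOR) and its standard error can be computed from a single study's 2x2 table of true positives, false positives, false negatives, and true negatives. The following is a minimal sketch with hypothetical counts; the function name and the 0.5 continuity correction for zero cells are assumptions for illustration, not details reported in the study.

```python
import math

def ln_dor(tp, fp, fn, tn, correction=0.5):
    """Return (ln(DOR), standard error) for one study's 2x2 table."""
    cells = [tp, fp, fn, tn]
    # Assumption: add a continuity correction to every cell if any cell is zero
    if any(c == 0 for c in cells):
        tp, fp, fn, tn = (c + correction for c in cells)
    # DOR = (TP / FN) / (FP / TN) = (TP * TN) / (FP * FN)
    log_dor = math.log((tp * tn) / (fp * fn))
    # Standard error of ln(DOR): square root of the summed reciprocal counts
    se = math.sqrt(1 / tp + 1 / fp + 1 / fn + 1 / tn)
    return log_dor, se

# Hypothetical study: 100 diseased and 100 non-diseased patients
log_dor, se = ln_dor(tp=90, fp=20, fn=10, tn=80)
print(log_dor, se)
```

Because the standard error depends on the reciprocal of each cell count, studies with fewer patients have larger standard errors, which is why precision serves as a stand-in for study size on a funnel plot.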

The most-investigated imaging modalities were MRI (11 meta-analyses), ultrasound (8 meta-analyses), and CT (5 meta-analyses), with other modalities investigated in two or fewer meta-analyses.

Using a composite funnel plot and regression analysis, the researchers found that a "relative lack" of studies had higher study effects, with similar trends seen in individual imaging modalities. They also found a regression coefficient of ln(DOR) against the standard error of ln(DOR) of 2.19 (p < 0.001), with CT as the reference modality.

The team also found an inverse association between effect size estimate and precision independent of imaging modality.

In other words, the larger the study, the more precise its effect estimates were. The smaller the study, the more inflated its reported effects tended to be.

"Since studies with lower precision generally represent those with smaller sample size, these results are compatible with small study effects," Lu and colleagues wrote. "The observation was consistent across individual modalities."

The team also found that of the 26 meta-analyses that formally assessed publication bias using funnel plots and statistical tests, 21 found no evidence of such bias.

The authors called for further research to identify the various factors contributing to small-study effects. They wrote that these effects have implications for cost, imaging use, and patient outcomes through suboptimal choice of imaging test or unnecessary exposure to ionizing radiation.

"To mitigate some of these issues, we suggest that readers and authors be aware that commonly used tests for funnel plot asymmetry often do not have enough power in diagnostic imaging meta-analyses and thus may underestimate or fail to detect the presence of underlying bias," they added.