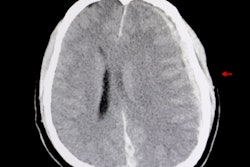

An artificial intelligence (AI) algorithm can detect all types of intracranial hemorrhage (ICH) on noncontrast head CT studies, showing potential for use in all clinical scenarios that involve detecting and tracking this critical condition, according to research presented at the recent RSNA 2018 meeting in Chicago.

Researchers from the Massachusetts Institute of Technology's Lincoln Laboratory and Boston University Medical Center trained and tested a deep residual convolutional neural network (DRCNN) to provide automated detection. The deep-learning algorithm, which can be operated at two different threshold settings depending on whether high sensitivity or high specificity is needed, was found to offer value for the initial detection of ICH, as well as for monitoring hemorrhages on follow-up and postoperative studies.

Initial efforts to provide automated detection of ICH used conventional image-analysis methods such as thresholding, said presenter Dr. Chad Farris, PhD, of Boston University Medical Center. Although these techniques were shown to improve detection performance in less-experienced readers, they did not improve detection by experienced attending radiologists. More recently, deep learning has been applied with great success to automated detection of ICH, but it has not yet been applied to all clinical settings, including postoperative patients, he said.

As a result, the researchers sought to develop a DRCNN to provide automated detection of all ICH varieties in all possible clinical scenarios, including initial head CT exams for diagnosis of ICH, follow-up head CT scans for known ICH, and postoperative head CT after surgical intervention. After searching their radiology information system (RIS) for noncontrast head CT studies over a two-year period, they gathered 46 normal studies and 95 unique cases of ICH. Each hemorrhage was segmented and assigned to one of the five types of ICH: epidural, subdural, subarachnoid, intraparenchymal, and intraventricular.

The researchers trained the algorithm with 60 cases of ICH and validated it with five cases. The remaining 30 cases of ICH were set aside for testing; these included 56 hemorrhages across six initial scans, 17 follow-up scans, and seven postoperative scans. Using these testing cases along with the 46 normal head CT studies, they then tested the DRCNN at two threshold settings: a high threshold designed to achieve high specificity at the cost of lower sensitivity, and a low threshold that provides high sensitivity at the cost of lower specificity.
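The trade-off between the two settings can be illustrated with a minimal Python sketch. It assumes the network outputs a single hemorrhage score between 0 and 1 per study, which the presentation did not specify; the threshold values, study IDs, and scores below are hypothetical and are not data from the study.

```python
# Hypothetical threshold values; the study did not report the actual settings.
HIGH_THRESHOLD = 0.9  # fewer flags: favors specificity
LOW_THRESHOLD = 0.3   # more flags: favors sensitivity


def flag_studies(scores, threshold):
    """Return the IDs of studies whose score meets or exceeds the threshold."""
    return [study_id for study_id, score in scores.items() if score >= threshold]


# Made-up per-study scores, for illustration only.
scores = {"ct_001": 0.95, "ct_002": 0.55, "ct_003": 0.10, "ct_004": 0.35}

high_flags = flag_studies(scores, HIGH_THRESHOLD)
low_flags = flag_studies(scores, LOW_THRESHOLD)

print("High threshold flags:", high_flags)
print("Low threshold flags:", low_flags)
```

Every study flagged at the high threshold is also flagged at the low threshold, so lowering the threshold can only add detections and false positives, never remove them.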

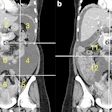

Deep-learning algorithm performance by hemorrhage type (hemorrhages detected/total)

| Type of hemorrhage | High-threshold setting | Low-threshold setting |
| --- | --- | --- |
| Epidural | 0/1 (0%) | 1/1 (100%) |
| Subdural | 6/10 (60%) | 8/10 (80%) |
| Subarachnoid | 6/12 (50%) | 10/12 (83%) |
| Intraparenchymal | 17/21 (81%) | 19/21 (90%) |
| Intraventricular | 10/12 (83%) | 12/12 (100%) |
| Total | 39/56 (70%) | 50/56 (89%) |

The high-threshold setting had a 2% false-positive rate (one false-positive result), while the low-threshold setting had a 28% false-positive rate (13 false-positive results).
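The reported percentages can be checked with a short Python sketch that recomputes them from the counts in the table. The hemorrhage counts are copied from the table; treating the 46 normal studies as the denominator for the false-positive rates is an assumption (it is consistent with the reported 2% and 28%), and the specificity figures are derived from that assumption rather than reported by the researchers.

```python
# Counts from the table: type -> (detected at high threshold, detected at low threshold, total)
counts = {
    "Epidural": (0, 1, 1),
    "Subdural": (6, 8, 10),
    "Subarachnoid": (6, 10, 12),
    "Intraparenchymal": (17, 19, 21),
    "Intraventricular": (10, 12, 12),
}


def pct(numerator, denominator):
    """Percentage rounded to the nearest whole number."""
    return round(100 * numerator / denominator)


total = sum(n for _, _, n in counts.values())
high_detected = sum(high for high, _, _ in counts.values())
low_detected = sum(low for _, low, _ in counts.values())

for name, (high, low, n) in counts.items():
    print(f"{name}: high {pct(high, n)}%, low {pct(low, n)}%")
print(f"Total: high {pct(high_detected, total)}%, low {pct(low_detected, total)}%")

# Assumption: false-positive rates are computed over the 46 normal studies.
NORMAL_STUDIES = 46
false_positives = {"high": 1, "low": 13}

for setting, fp in false_positives.items():
    fp_rate = pct(fp, NORMAL_STUDIES)
    specificity = pct(NORMAL_STUDIES - fp, NORMAL_STUDIES)  # derived, not reported
    print(f"{setting} threshold: false-positive rate {fp_rate}%, specificity {specificity}%")
```

Under that assumption, the high-threshold setting corresponds to a specificity of roughly 98% and the low-threshold setting to roughly 72%.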

The researchers concluded that DRCNNs can be trained to successfully detect all types of ICH on noncontrast head CT exams in all clinical scenarios. Farris noted that radiologists could adjust the DRCNN threshold to alter its sensitivity and specificity for detecting ICH.

"This may be important for different cases; you may want to use a high threshold that is low sensitivity [but] high specificity for increasing the radiologist's confidence in a finding that a hemorrhage is present, if there's something that's equivocal," he said. "Or you might want to use a low threshold setting because you want high sensitivity but low specificity if you're screening a list of CTs for rapid evaluation by the radiologist. You may [also] potentially want to use more than one [threshold] for each study."