It is surprising that the New England Journal of Medicine allowed an author to publish an analysis almost identical to one published in 2012. The new article by Welch et al is little more than a rehash of the 2012 paper by Bleyer and Welch that falsely claimed massive overdiagnosis of breast cancer.

The current study analyzes the incidence of breast cancer and its relationship to mammography screening with a methodology as faulty as that of the earlier work. In the 2012 paper, Welch "guessed" that the incidence of breast cancer, had screening not begun in the mid-1980s, would have increased by 0.25% per year.

Dr. Daniel Kopans from Massachusetts General Hospital.

By extrapolating this "underlying" rate to 2008, the authors estimated what the incidence would have been in 2008 had there not been any screening. Since the actual incidence was much higher, they claimed that the difference between the actual and their "guesstimate" represented cancers that would have never been clinically apparent had there not been mammography screening.

They called these cases "overdiagnosed," suggesting that the cancers would have regressed or disappeared had they not been found by breast screening. They went on to claim that there were 70,000 of these "fake" cancers in 2008 alone, despite the fact that no one has ever seen a mammographically detected invasive breast cancer "disappear" on its own.

The NEJM published this denigration of mammography screening despite the fact that the authors had no data on which women had mammograms and which cancers were found by mammography. I have never seen a scientifically based paper in which an intervention (mammography) is blamed for an outcome when there are no data on the intervention relative to the outcome, yet the prestigious NEJM published the nonscience faulting mammography based on a "guess."

The fundamental problem with the 2012 paper was that the authors "guessed" that the underlying breast cancer incidence, had there been no screening, was defined by the period of 1976 to 1978. They ignored the fact that this period came immediately after a burst of ad hoc screening prompted by the cancers diagnosed in the wives of the president and vice president of the U.S. in 1974. This "burst" of screening was short-lived, but it caused the bump seen in the Surveillance, Epidemiology, and End Results (SEER) data in 1974, followed by a "compensatory" drop in incidence below baseline when screening stopped, because cancers had been removed earlier.

This made the period of 1976 to 1978 the most unreliable period on which to "guess" at the baseline. In fact, 40 years of data from the highly respected Connecticut Tumor Registry (which predated the SEER program) showed that the incidence of invasive breast cancer had been increasing steadily by 1% per year since 1940 (four times what the authors had used in 2012 and consistent with the prescreening SEER period). Had they used the correct underlying rate, they would have found that the incidence of invasive breast cancer in 2008 was actually lower than what was expected by extrapolating. This makes sense since the removal of ductal carcinoma in situ (DCIS) lesions would be expected to reduce the future incidence of invasive cancers.
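To see how sensitive such an extrapolation is to the assumed growth rate, here is a minimal sketch of the arithmetic. It assumes compound annual growth from a starting index of 100 in 1978 through 2008; the index value, the starting year, the compounding model, and the name `extrapolate` are illustrative assumptions, not figures or methods taken from the papers or from SEER.

```python
def extrapolate(baseline: float, annual_rate: float, years: int) -> float:
    """Project an incidence forward assuming compound annual growth."""
    return baseline * (1.0 + annual_rate) ** years


# Hypothetical starting index (not a SEER value) and an assumed 30-year span.
BASELINE_INDEX = 100.0
YEARS = 2008 - 1978

# Growth rates mentioned in the text: flat, 0.25% per year, and 1% per year.
for rate in (0.0, 0.0025, 0.01):
    projected = extrapolate(BASELINE_INDEX, rate, YEARS)
    print(f"{rate:.2%} per year -> expected 2008 index {projected:.1f}")
```

Under these assumptions, 30 years at 0.25% per year raises the expected incidence by roughly 8%, while 1% per year raises it by roughly 35%. An observed incidence that falls between those two projections looks like an excess against the lower baseline and a deficit against the higher one, which is why the choice of baseline decides the conclusion.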

With this latest publication, Welch is back with his guessing at the underlying rate of invasive cancers had there not been any screening. But this time he even dismisses his previous "guess" and now claims that there was no underlying increase in incidence, ignoring the 0.25% rate he claimed in his first paper. The authors then used this flat line to extrapolate what the incidence would have been in 2012 had there not been any screening. Since the actual incidence was considerably higher, they once again (falsely) claimed massive "overdiagnosis" of invasive breast cancers.

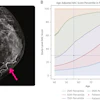

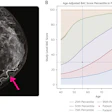

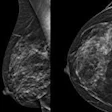

A better representation of the prescreening incidence, according to their own analysis (see figure 1 in their paper), is the period of 1979 to 1982. It is obvious that the underlying prescreening incidence before the start of prevalence screening was increasing by at least 1% per year, and not the flat line that the authors claim.

Not only does this new paper contradict the number used in the 2012 study, but both are incorrect. Once again, their conclusions would have been exactly the opposite had they used the correct baseline, which shows that invasive breast cancer incidence was increasing steadily and consistently by 1% per year long before there was any screening. Had they extrapolated from 40 years of data in Connecticut, confirmed by the SEER data from 1979 to 1982, instead of from three of the most uncertain years in the SEER database, they would have found that there is no "overdiagnosis" of invasive cancers.

This most recent paper is also a rehash of another Welch paper published October 29, 2015, in the NEJM, titled "Trends in metastatic breast and prostate cancer." This paper, using the same faulty extrapolation, claimed that the rate of advanced breast cancers had only decreased by a small amount, and claimed that mammography had had little effect.

In this most recent paper they make the same claim using the incidence of metastatic disease. Had there been no screening, the rate of women with metastatic disease would be expected to go up at the same underlying rate as invasive cancers (1% per year). The fact that the rate has not increased, and has actually decreased, means that, contrary to the authors' assertions, there has been a major decline in women with metastatic disease, associated with the major shift to the detection of smaller cancers by screening.

The authors have also clouded their analysis of the case fatality rates to claim that most of the decline in deaths is due to improvements in therapy. By grouping the data into size ranges they obscured the information. Deaths are decreased by changes in cancer size even within stages.

I suspect that if the data are presented by each size, with no grouping, the case fatality rate will be more closely linked to the actual size of the cancers with improvements for the smaller tumors within their ranges. Therapy has improved, but oncologists know that the most lives are saved by annual screening starting at the age of 40.

It is a major concern that the NEJM would publish three papers falsely disparaging breast cancer screening by allowing the same faulty assumptions to be used in all three analyses. Clearly peer review has failed at the NEJM.

What should not be missed is that if actual data are used, and not guesses, this paper confirms that the major decline in breast cancer deaths that has been seen since 1990 is predominantly due to earlier detection from screening.

Dr. Kopans is a professor of radiology at Harvard Medical School and a senior radiologist in the department of radiology, breast imaging division, at Massachusetts General Hospital.

The comments and observations expressed herein are those of the author and do not necessarily reflect the opinions of AuntMinnie.com.