An artificial intelligence (AI) method can generate virtual attenuation-corrected PET images, which could cut patients' radiation exposure from PET/CT, according to research presented at the Society of Nuclear Medicine and Molecular Imaging (SNMMI) meeting.

The findings are good news for cancer patients, who often must undergo many PET/CT exams throughout diagnosis and treatment, noted a team led by Kevin Ma, PhD, of the National Cancer Institute in Bethesda, MD.

"The CT portion of the exam contributes about half the radiation exposure yet is redundant to a large degree," Ma explained in a June 12 presentation. "Artificial intelligence methods could be used to generate virtual CT scans that provide the anatomic localization of PET activity but do not expose the patient to additional radiation."

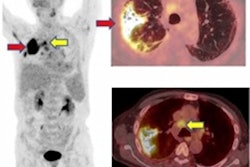

The investigators used 305 PET/CT studies performed with F-18 DCFPyL (Pylarify, Lantheus Medical Imaging) to develop the AI model. Each study included a non-attenuation-corrected PET exam, an attenuation-corrected PET exam, and a low-dose CT exam; the studies were divided into a training set (185), a validation set (60), and a test set (60).
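The three sets add up to the full cohort (185 + 60 + 60 = 305). The presentation does not say how studies were assigned, so the sketch below simply assumes a seeded random split at the study level, which keeps each study's paired NAC-PET, AC-PET, and CT exams together in one set. The function name `split_studies` and the placeholder study IDs are hypothetical.

```python
import random


def split_studies(study_ids, n_train, n_val, n_test, seed=0):
    """Randomly partition study IDs into disjoint train/validation/test sets.

    Hypothetical sketch: assumes a simple study-level random split, so all
    exams belonging to one study land in the same set.
    """
    if n_train + n_val + n_test != len(study_ids):
        raise ValueError("split sizes must add up to the number of studies")
    shuffled = list(study_ids)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


if __name__ == "__main__":
    studies = [f"study_{i:03d}" for i in range(305)]  # placeholder IDs
    train, val, test = split_studies(studies, 185, 60, 60)
    print(len(train), len(val), len(test))  # 185 60 60
```

Splitting by study rather than by image slice is the usual way to keep slices from the same patient from leaking between training and test data.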

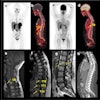

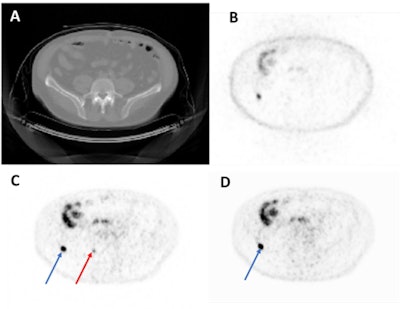

Representative axial image from a test set imaging study. From top left to bottom right: CT (A), NAC-PET (B), original AC-PET (C), AI-generated AC-PET (D). The blue arrow indicates one lesion that was observed in both images, and the red arrow indicates a lesion that was missed (i.e., not detected by expert nuclear medicine physicians) in the AI-generated image. NAC = Non-Attenuation Corrected. AC = Attenuation-Corrected. Courtesy of Kevin Ma, PhD, et al.

The group generated synthetic attenuation-corrected PET scans from the original non-attenuation-corrected PET exams. Two nuclear medicine radiologists, blinded to whether the studies were attenuation-corrected or not, reviewed 40 randomized PET/CT exams and assessed overall noise and image quality.

Reader 1 demonstrated 70% sensitivity with the virtual attenuation-corrected PET/CT images and reader 2 demonstrated 68% sensitivity; positive predictive value for reader 1 was 89% and for reader 2 it was 83%. Reader 1 had a 61% false-positive rate and reader 2 had a rate of 89% on the virtual PET/CT images. Each reader produced two false negatives.
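The article does not spell out how these reader figures were calculated. As a rough guide, sensitivity and positive predictive value are conventionally defined as shown below. This minimal sketch assumes lesion-level counts scored against the original attenuation-corrected PET as the reference; the `ReaderCounts` class and the counts in it are hypothetical and are not the study's data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReaderCounts:
    """Hypothetical lesion-level counts for one reader."""
    true_positives: int   # reference lesions also called on the AI image
    false_negatives: int  # reference lesions missed on the AI image
    false_positives: int  # calls on the AI image with no matching reference lesion


def sensitivity(c: ReaderCounts) -> float:
    """Share of reference lesions the reader detected: TP / (TP + FN)."""
    return c.true_positives / (c.true_positives + c.false_negatives)


def positive_predictive_value(c: ReaderCounts) -> float:
    """Share of the reader's calls that were real lesions: TP / (TP + FP)."""
    return c.true_positives / (c.true_positives + c.false_positives)


if __name__ == "__main__":
    # Made-up counts for illustration only.
    example = ReaderCounts(true_positives=12, false_negatives=3, false_positives=4)
    print(f"sensitivity = {sensitivity(example):.0%}")               # 80%
    print(f"PPV         = {positive_predictive_value(example):.0%}")  # 75%
```

Sensitivity penalizes missed lesions, while PPV penalizes spurious calls, which is why the two are reported side by side.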

The findings are promising, but there's more work to be done to refine the algorithm, according to Ma's team.

"We have developed a deep learning solution to create virtual [synthetic attenuation-corrected PET] images from [non-attenuation corrected PET]," it concluded. "Readers were able to successfully detect lesions on the AI generated PET images with reasonable sensitivity values ... [but] future work is needed to validate [synthetic attenuation-corrected PET] uptake values."