Within seconds, an artificial intelligence (AI) algorithm can assess chest x-rays for the presence of COVID-19, enabling rapid screening and triage of patients arriving at hospitals for reasons other than the virus, according to research published online November 24 in Radiology.

A team of researchers from Northwestern University led by senior author Aggelos Katsaggelos, PhD, and cardiologist Dr. Ramsey Wehbe trained their algorithm -- called DeepCOVID-XR -- on nearly 15,000 chest x-rays acquired earlier this year. In testing on an independent dataset, the algorithm performed comparably to the consensus interpretations by five experienced radiologists and was also 10 times faster.

"We feel that this algorithm has the potential to benefit healthcare systems in mitigating unnecessary exposure to the virus by serving as an automated tool to rapidly flag patients with suspicious chest imaging for isolation and further testing," the authors wrote.

DeepCOVID-XR is an ensemble of 24 individually trained deep convolutional neural networks (CNNs). First, the algorithm was pretrained using a dataset of over 100,000 chest x-ray images from the U.S. National Institutes of Health (NIH).

Next, DeepCOVID-XR was trained and validated on 14,788 images -- including 4,253 COVID-19-positive images -- gathered from 20 sites across the Northwestern Memorial Healthcare System between February and April 2020.

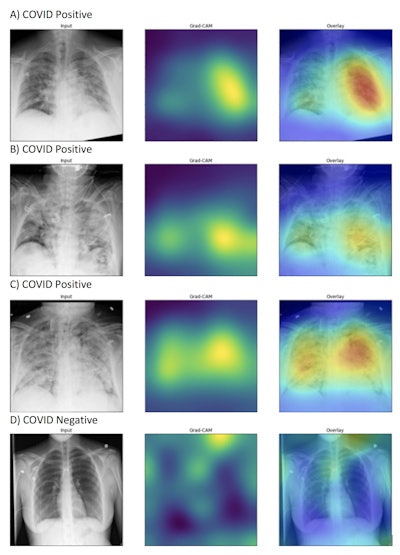

After analyzing the images, DeepCOVID-XR yields a final binary prediction of either positive or negative for COVID-19 based on the weighted average of the predictions by the individual CNNs that make up the ensemble. The algorithm also uses gradient-weighted class activation mapping (Grad-CAM) to overlay "heat maps" on the images. These let users see which image features were most important in arriving at the algorithm's prediction of a positive COVID-19 result.
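To make the weighted-average step concrete, here is a minimal Python sketch of how per-model probabilities could be combined into one binary call. It is an illustration only, not the authors' code: the function name `ensemble_predict`, the example weights, and the 0.5 decision threshold are all assumptions, since the article does not report how the weights or the cutoff were chosen.

```python
from typing import Sequence, Tuple


def ensemble_predict(
    probs: Sequence[float],
    weights: Sequence[float],
    threshold: float = 0.5,  # assumed cutoff; not stated in the article
) -> Tuple[float, bool]:
    """Combine per-model COVID-19 probabilities into a single binary call.

    probs   -- one predicted probability per ensemble member
    weights -- one non-negative weight per ensemble member (hypothetical values)
    Returns the weighted-average score and whether it meets the threshold.
    """
    if not probs or len(probs) != len(weights):
        raise ValueError("probs and weights must be non-empty and the same length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    # Weighted average: each model's vote counts in proportion to its weight.
    score = sum(p * w for p, w in zip(probs, weights)) / total_weight
    return score, score >= threshold


# Three hypothetical ensemble members; the first is trusted twice as much.
score, is_positive = ensemble_predict([0.9, 0.6, 0.3], [2.0, 1.0, 1.0])
print(f"ensemble score = {score:.3f}, positive = {is_positive}")
```

With equal weights, this reduces to a plain average of the individual predictions.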

DeepCOVID-XR's generated heat maps correctly highlighted abnormalities in the lung fields in those images accurately labeled as COVID-19 positive (A-C) in contrast to images that were accurately labeled as negative for COVID-19 (D). Intensity of colors on the heat map corresponds to features of the image that are important for prediction of COVID-19 positivity. Image and caption courtesy of Northwestern University.

The researchers tested the algorithm on 2,214 chest x-rays -- including 1,192 positive for COVID-19 -- from a single community hospital that had not contributed to the training or validation datasets. In this test set, DeepCOVID-XR produced 82% accuracy and an area under the curve (AUC) of 0.88.
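For readers less familiar with these two metrics, the following Python sketch shows how accuracy and AUC can be computed from ground-truth labels and model scores. The six labels and scores are made-up toy values, not study data, and the 0.5 threshold is an assumption. The AUC here uses the pairwise (Mann-Whitney) formulation: the fraction of positive-negative pairs in which the positive image receives the higher score.

```python
from typing import Sequence


def accuracy(labels: Sequence[int], scores: Sequence[float], threshold: float = 0.5) -> float:
    """Fraction of images whose thresholded score matches the true label."""
    correct = sum((s >= threshold) == bool(y) for y, s in zip(labels, scores))
    return correct / len(labels)


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison (ties count as half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy example (hypothetical values): 1 = COVID-19 positive, 0 = negative.
toy_labels = [1, 1, 1, 0, 0, 0]
toy_scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2]
print(f"accuracy = {accuracy(toy_labels, toy_scores):.3f}")
print(f"AUC      = {auc(toy_labels, toy_scores):.3f}")
```

Accuracy depends on the chosen threshold, while AUC summarizes performance across all thresholds, which is why studies like this one typically report both.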

Next, the researchers compared the performance of the algorithm with five experienced radiologists on a set of 300 randomly selected images from the testing dataset. These readers included four board-certified thoracic radiologists with eight years, six years, six years, and one year of experience, respectively, as well as one diagnostic radiologist with 38 years of experience.

DeepCOVID-XR performance on test set of 300 chest x-rays for detecting COVID-19

| | Radiologists (individual) | Radiologists (consensus) | DeepCOVID-XR |
| --- | --- | --- | --- |
| Accuracy | Range = 76%-81% | 81% | 82% |
| AUC | n/a | 0.85 | 0.88 |

The differences in accuracy and AUC between the radiologists and DeepCOVID-XR were not statistically significant.

Running on a single Nvidia Titan V graphics processing unit, DeepCOVID-XR analyzed the subset of 300 images in approximately 18 minutes, compared with about 2.5 to 3.5 hours for each of the expert radiologists.
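The timing figures above can be turned into per-image times and a speed-up range with a few lines of arithmetic. The Python sketch below uses only the numbers reported in the article; the variable names are illustrative.

```python
# Figures reported in the article for the 300-image comparison subset.
N_IMAGES = 300
ALGORITHM_MINUTES = 18
RADIOLOGIST_HOURS = (2.5, 3.5)  # fastest and slowest expert reader

# Per-image reading time for the algorithm, in seconds.
algorithm_sec_per_image = ALGORITHM_MINUTES * 60 / N_IMAGES

# Per-image reading time for the radiologists, in seconds (fastest, slowest).
radiologist_sec_per_image = tuple(h * 3600 / N_IMAGES for h in RADIOLOGIST_HOURS)

# Speed-up factor of the algorithm relative to each radiologist bound.
speedups = tuple(h * 60 / ALGORITHM_MINUTES for h in RADIOLOGIST_HOURS)

print(f"algorithm: {algorithm_sec_per_image:.1f} s per image")
print(f"radiologists: {radiologist_sec_per_image[0]:.0f}-{radiologist_sec_per_image[1]:.0f} s per image")
print(f"speed-up: {speedups[0]:.1f}x to {speedups[1]:.1f}x")
```

This works out to about 3.6 seconds per image for the algorithm against 30 to 42 seconds for the radiologists. That is a speed-up of roughly 8 to 12 times, in line with the "10 times faster" summary figure.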

In future work, the researchers plan to conduct a prospective evaluation of the algorithm. They also would like to incorporate other clinical data into the algorithm and adapt it to also predict risk for clinically meaningful outcomes in COVID-19 patients. The authors noted that they have made their algorithm publicly available for other researchers to use and train with new data.

"By providing the DeepCOVID-XR algorithm code base as an open-source resource, we hope investigators around the world will further improve, fine-tune, and test the algorithm using clinical images from their own institutions," they wrote.