The U.S. Food and Drug Administration (FDA) has announced an effort to develop a new regulatory framework for medical artificial intelligence (AI) algorithms that continuously learn and evolve from experience gained in real-world clinical use.

In a statement on Tuesday, outgoing FDA Commissioner Dr. Scott Gottlieb revealed the first step in this process: a discussion paper that describes the agency's vision for how it might regulate these continuously learning algorithms throughout all phases of the product life cycle.

"We plan to apply our current authorities in new ways to keep up with the rapid pace of innovation and ensure the safety of these devices," he wrote.

To date, most AI technologies cleared by the agency have been "locked" algorithms that don't adapt or learn each time they are used. Instead, manufacturers modify these algorithms at intervals, training them with new data and then manually verifying and validating the updated versions, according to Gottlieb.

Adaptive or continuously learning algorithms, however, don't require manual modification to incorporate updates. Instead, they can learn from new user data, he explained.

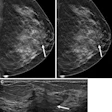

"For example, an algorithm that detects breast cancer lesions on mammograms could learn to improve the confidence with which it identifies lesions as cancerous or may learn to identify specific subtypes of breast cancer by continually learning from real-world use and feedback," he wrote.

As a first step in developing this new approach, the FDA has outlined the information it might require for premarket review of devices that include AI algorithms that make real-world modifications. This includes the algorithm's performance, the manufacturer's plan for modifications, and the manufacturer's ability to manage and control the risks of those modifications, according to Gottlieb.

Furthermore, the agency signaled its intention to review the software's predetermined change control plan, Gottlieb said. The plan would provide detailed information about the types of modifications anticipated under the algorithm's retraining and update strategy, as well as the methodology used to implement those changes in a controlled manner that manages risk to patients.

"Consistent with our existing quality systems regulation, the agency expects software developers to have an established quality system that is geared towards developing, delivering, and maintaining high-quality products throughout the life cycle that conforms to the agency's standards and regulations," he wrote.

Gottlieb said the goal of the framework is to ensure that ongoing algorithm changes follow prespecified performance objectives and change control plans; use a validation process that ensures improvements to the performance, safety, and effectiveness of the artificial intelligence software; and, once on the market, include real-world performance monitoring so that device safety and effectiveness are maintained.
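To make the idea of prespecified performance objectives concrete, here is a minimal Python sketch of how a developer might gate an algorithm update. It is an illustration only, not anything described in the FDA's paper: the `ChangeControlPlan` class, the `accept_update` function, and the choice of sensitivity and specificity as metrics are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeControlPlan:
    """Hypothetical prespecified objectives; thresholds are illustrative only."""
    min_sensitivity: float
    min_specificity: float


def sensitivity_specificity(labels, predictions):
    """Compute sensitivity and specificity from binary labels and predictions."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return sensitivity, specificity


def accept_update(plan, labels, current_preds, candidate_preds):
    """Accept a retrained model only if it meets the plan and does not regress.

    Both models are scored on the same held-out validation labels.
    """
    cur_sens, cur_spec = sensitivity_specificity(labels, current_preds)
    new_sens, new_spec = sensitivity_specificity(labels, candidate_preds)
    meets_plan = (new_sens >= plan.min_sensitivity
                  and new_spec >= plan.min_specificity)
    no_regression = new_sens >= cur_sens and new_spec >= cur_spec
    return meets_plan and no_regression
```

In this sketch, a retrained model that raises sensitivity at the cost of specificity falling below the planned floor would be rejected, even though one of its metrics improved. A real change control plan would likely cover far more than two metrics, including the monitoring of performance after deployment.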

After receiving feedback on its discussion paper, the FDA will issue draft guidance.

"While I know there are more steps to take in our regulation of artificial intelligence algorithms, the first step taken today will help promote ideas on the development of safe, beneficial, and innovative medical products," Gottlieb wrote.