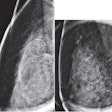

The researchers began with more than 136,000 mammographic images and more than 755,000 DICOM files. Filtering to include only screening and diagnostic studies and standard, nonmagnification views resulted in more than 127,000 images corresponding to some 660,000 DICOM files.

"We exploit this robust dataset to train neural networks aimed at detecting cancer on both screening and diagnostic mammograms," said Dr. Hari Trivedi, a fellow in the department of radiology and biomedical imaging at the University of California, San Francisco. "The implications of this technique are broad, as the process can be replicated at multiple institutions and across various radiologic subspecialties."

A regular expression technique with manual validation was used to assign final pathologic diagnoses to a pilot subset of DICOM files based on positive pathology results within one year. This process was combined with BI-RADS 1, 2, and 6 cases to create approximately 4,700 cases labeled as cancer and some 355,000 cases labeled as noncancer.
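The labeling step described above can be illustrated with a minimal Python sketch. The article does not publish the actual expressions or rules, so everything here is an assumption made for illustration: the patterns `MALIGNANT` and `NEGATED`, the function `label_study`, and the mapping of BI-RADS 1 and 2 to noncancer and BI-RADS 6 (known biopsy-proven malignancy) to cancer. In the study, the regex output was also validated manually, which this sketch does not attempt.

```python
import re
from datetime import date, timedelta

# Hypothetical patterns for illustration only; not the researchers' expressions.
MALIGNANT = re.compile(
    r"\b(?:invasive|infiltrating)\s+(?:ductal|lobular)\s+carcinoma\b"
    r"|\bductal\s+carcinoma\s+in\s+situ\b"
    r"|\bDCIS\b",
    re.IGNORECASE,
)
NEGATED = re.compile(
    r"\b(?:no|negative\s+for|without)\s+(?:evidence\s+of\s+)?(?:malignancy|carcinoma)\b",
    re.IGNORECASE,
)


def label_study(study_date, birads, pathology_reports):
    """Return 'cancer', 'noncancer', or 'unlabeled' for one imaging study.

    pathology_reports is a list of (report_date, report_text) tuples.
    """
    window_end = study_date + timedelta(days=365)

    # Positive pathology within one year of the study marks it as cancer.
    for report_date, text in pathology_reports:
        if study_date <= report_date <= window_end:
            if MALIGNANT.search(text) and not NEGATED.search(text):
                return "cancer"

    # Assumed mapping: BI-RADS 6 is cancer, BI-RADS 1 and 2 are noncancer.
    if birads == 6:
        return "cancer"
    if birads in (1, 2):
        return "noncancer"
    return "unlabeled"


if __name__ == "__main__":
    reports = [(date(2016, 2, 1), "Invasive ductal carcinoma, grade 2.")]
    print(label_study(date(2016, 1, 10), 4, reports))
```

A real pipeline would need far more careful handling of negation, laterality, and report structure, which is one reason manual validation of the regex output matters.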

"We view these algorithms as a tool to aid rather than replace radiologists by potentially improving accuracy, efficiency, and confidence in breast cancer detection," Trivedi said. "Ultimately, a well-trained network with sufficient data may be able to distinguish between invasive malignancies requiring excision and noninvasive malignancies which may be safe to monitor."

The model achieved an area under the receiver operating characteristic curve (AUC) of 0.83 for predictions on an internal test set of 200 cancer and 200 noncancer images. When tested on an external validation set of 2,000 images that reflected a more clinically realistic ratio of cancer to noncancer cases, the model achieved an AUC of 0.96.
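For readers unfamiliar with the metric, AUC can be read as the probability that a randomly chosen cancer image receives a higher model score than a randomly chosen noncancer image. The short Python sketch below computes it directly from that definition. The function name `roc_auc` and the toy scores are illustrative assumptions and have no connection to the study's data or code.

```python
def roc_auc(labels, scores):
    """Compute AUC by comparing every positive score with every negative score.

    labels: iterable of 1 (cancer) or 0 (noncancer)
    scores: iterable of model outputs, higher meaning more suspicious
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    # Toy data: two cancer images and two noncancer images.
    labels = [1, 1, 0, 0]
    scores = [0.9, 0.4, 0.5, 0.1]
    print(roc_auc(labels, scores))  # 3 of 4 pairs ranked correctly -> 0.75
```

The pairwise loop is quadratic, which is acceptable for a test set of a few hundred images; larger sets would normally use a rank-based or library implementation.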

"Our technique decreases both the time and cost associated with the generation of very large datasets," Trivedi told AuntMinnie.com. "We hope that this can be used to accelerate the pace of current research in deep learning in radiology. This has the potential to impact patients by decreasing unnecessary recalls, biopsies, and surgeries."