A number of virtual colonoscopy computer-aided detection (CAD) studies in recent years have shown promising results for the detection of colorectal polyps and cancer. But usually left unanswered is the question of whether CAD results actually improve reader performance, which is, ultimately, the only question that matters when examining patients in the real world.

Such indirect measurements of CAD performance can't predict, for example, whether a radiologist using CAD will find more patients who are positive for clinically significant polyps, whether the radiologist will dismiss true-positive CAD findings, or whether overall performance improves once the human being joins the machine.

So a group of radiologists in the U.K. decided to look deeper, hoping to assess how VC CAD can affect patient classification as positive or negative, polyp detection, and reading times.

They found that VC CAD improved reader performance -- significantly and fairly evenly -- at all levels. Roughly speaking, the less experienced readers were brought up to mediocre status, and better readers became excellent.

The research was led by Dr. Steve Halligan from University College London and University College Hospitals NHS Trust. He presented the results last month at the European Congress of Radiology (ECR) in Vienna.

The study relied on CT data gleaned from 346 patients at seven centers in the U.S. and Europe. All patients had undergone prone and supine virtual colonoscopy imaging on four- or eight-detector CT scanners (maximum collimation of 2.5 mm) following cathartic bowel preparation.

The FDA-approved VC CAD system (Colon CAR 1.2, Medicsight, London) was trained on data from 239 patients (comprising 494 colonoscopy-proven polyps) and tested in a separate set of 107 patients.

The data were reviewed by 10 accredited abdominal radiologists, who were familiarized with the software before beginning the program and identified polyps using a 100-point confidence scale.

"They were the equivalent of board-certified to read abdominal CT, but had no specific expertise in (VC)," Halligan explained in an e-mail to AuntMinnie.com. "This group of radiologists is often the target audience for CAD, because of the belief that CAD will compensate for training and/or experience."

After a two-month delay, the data were read again, this time with CAD, and reported once again by patient and by polyp on a 100-point confidence scale. In the CAD-assisted second read, the annotated and unannotated CT data were displayed simultaneously, Halligan said.

In accordance with the study aims, the results were reported in absolute terms, as well as by their effect on readers.

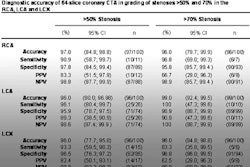

In per-patient results, at least one polyp was detected by CAD in 45 (75%) patients with polyps. CAD found 13 of 14 (93%) patients with polyps 1 cm or larger, and 37 of 40 (93%) patients with a polyp 6 mm or larger, Halligan said.

By polyp, 76 of 142 (54%) polyps were detected, including 17 of 19 (90%) lesions 1 cm and larger, and 49 of 62 (79%) lesions 6 mm and larger.
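The standalone CAD percentages quoted above can be checked from the reported counts. The short Python sketch below only redoes that arithmetic; the dictionary layout and the helper name `sensitivity_pct` are illustrative choices and are not part of the study or the CAD software.

```python
# Detected/total counts as reported for the standalone CAD system.
# The grouping into a dict and the function name are illustrative only.
reported_counts = {
    "patients, polyp >= 1 cm": (13, 14),
    "patients, polyp >= 6 mm": (37, 40),
    "polyps, all sizes": (76, 142),
    "polyps, >= 1 cm": (17, 19),
    "polyps, >= 6 mm": (49, 62),
}


def sensitivity_pct(detected: int, total: int) -> float:
    """Return detected/total as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * detected / total


for label, (detected, total) in reported_counts.items():
    print(f"{label}: {detected}/{total} = {sensitivity_pct(detected, total):.1f}%")
```

To one decimal place the rates come out at 92.9%, 92.5%, 53.5%, 89.5%, and 79.0%, which match the rounded figures quoted.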

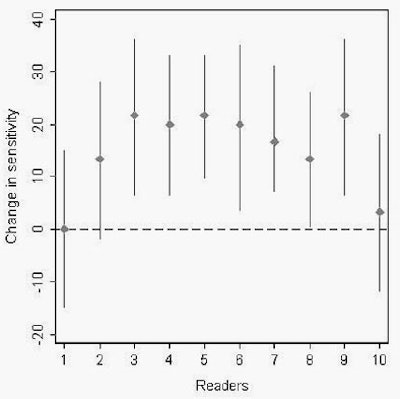

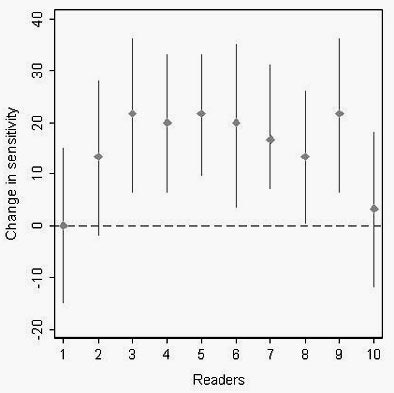

Per-patient detection by readers with CAD was relatively poor. Overall, 68% (95% CI 58% to 77%) of patients with polyps were more likely to be identified by readers using CAD. Specifically, 41 patients were more likely to be identified with CAD, eight were less likely to be identified, and in 11 patients there was no change in likelihood, Halligan said.

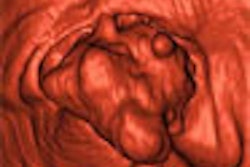

Among 60 subjects with polyps, 68% (on a per-patient basis) were more likely to be identified using CAD. Image courtesy of Dr. Steve Halligan.
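The per-patient tally reported in the text (41 more likely, eight less likely, 11 unchanged) can be turned into the 68% share with a few lines of Python. The sketch below also attaches a Wilson score interval to that share. This is an assumption made purely for illustration: the presentation did not say how the reported 58% to 77% interval was calculated, and the Wilson method gives a somewhat different range (roughly 56% to 79%).

```python
import math

# Per-patient changes as reported: identification more likely, less likely,
# or unchanged when readers used CAD.
more_likely, less_likely, unchanged = 41, 8, 11
patients_with_polyps = more_likely + less_likely + unchanged


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 for ~95%).

    Assumed method for illustration; not necessarily the one used in the study.
    """
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


share = more_likely / patients_with_polyps
low, high = wilson_interval(more_likely, patients_with_polyps)
print(f"{patients_with_polyps} patients, {share:.0%} more likely with CAD")
print(f"Wilson interval: {low:.1%} to {high:.1%}")
```

The gap between this interval and the reported one most likely reflects a different statistical method, for example one that accounts for multiple readers scoring the same patients.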

On a per-patient basis, sensitivity for lesion detection increased significantly in 70% of the readers, while specificity decreased in 10% of the readers. On a per-polyp basis, 12 (95% CI 7-16) more polyps were detected with CAD by each reader overall, including five (95% CI 3-7) medium-sized polyps and seven (95% CI 4-9) small polyps.

The group concluded that CAD increased reader per-patient and per-polyp sensitivities significantly, but had less impact on specificity.

CAD increased performance "by about the 12% level, and … increased sensitivity for all polyp sizes," Halligan said in his presentation. "Specificity was increased but not significantly."

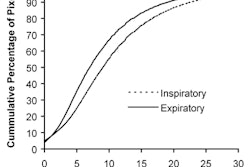

The software did cut interpretation time significantly, from a mean of 12.4 minutes to 10.5 minutes in patients with polyps, and from a mean of 9.7 minutes to 6.8 minutes in patients without polyps.
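For a sense of scale, the reported mean reading times can be converted into minutes and percentages saved. The Python sketch below is plain arithmetic on the published means; it does not reproduce the study's significance testing, and the function name `time_saving` is a hypothetical helper introduced here.

```python
# Mean interpretation times in minutes (without CAD, with CAD), as reported.
reading_times = {
    "patients with polyps": (12.4, 10.5),
    "patients without polyps": (9.7, 6.8),
}


def time_saving(before: float, after: float) -> tuple[float, float]:
    """Return (minutes saved, percent saved relative to the unaided read)."""
    saved = before - after
    return saved, 100.0 * saved / before


for group, (before, after) in reading_times.items():
    saved, pct = time_saving(before, after)
    print(f"{group}: {saved:.1f} min faster ({pct:.0f}% shorter)")
```

On these means the saving is about 1.9 minutes (15%) for patients with polyps and about 2.9 minutes (30%) for patients without polyps.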

"CAD undoubtedly does speed things up, so there may be economic benefits I suppose," Halligan said, drawing a number of other conclusions from the study.

"CAD increases sensitivity more than it decreases specificity," he wrote. "However, even with CAD, the overall detection was poor -- and this is undoubtedly a reflection on the readers. Better readers would have performed better, so the bottom line is that CAD cannot substitute for good training. (CAD's) role, in my opinion, is as an adjunct (i.e., safety net) for experienced, well-trained readers."

In a later study that has not yet been presented, Halligan said a subset of readers received formal training in virtual colonoscopy interpretation, then had their performance reassessed with CAD.

"They got much better," he said of the trained group. Moreover, the degree of improvement with CAD was about the same as for the untrained readers, "or maybe a little more, with decreased reading times but a higher baseline," he said. "So (all) of the performance characteristics were shifted up a line. We can't publish that until the present study is published, though." (The present study was submitted for publication last week.)

In response to a question from the audience in Vienna, Halligan said the results had confirmed the group's hypothesis that CAD cannot replace the skills acquired in VC training.

"There's no substitute for training, which is the bottom line," he said. "You can't give this to a monkey and expect (it) to do shedloads of private practice."

By Eric Barnes

AuntMinnie.com staff writer

April 7, 2006

Copyright © 2006 AuntMinnie.com