An artificial intelligence (AI) algorithm may be able to assist in classifying prostate lesions on 3D prostate-specific membrane antigen (PSMA)-PET exams, according to research presented at the annual meeting of the Society of Nuclear Medicine and Molecular Imaging (SNMMI).

Researchers from Johns Hopkins University in Baltimore have trained a deep 3D convolutional neural network (CNN) that can analyze prostate lesions and predict their categorization under the PSMA Reporting and Data System (PSMA-RADS) with a promising level of accuracy.

"The proposed method ... yielded [area under the curve] values ranging from 0.82 up to 0.98 across all PSMA-RADS categories on the test set, indicating accurate performance on the classification task," said presenter and first author Kevin Leung, PhD.

Reliable classification of prostate lesions from 3D F-18 DCFPyL PSMA PET images is an important clinical need for the diagnosis and prognosis of prostate cancer, Leung said. In recent years, PSMA-RADS was developed to enable classification of lesions on PSMA-targeted PET scans into categories that reflect the likelihood of prostate cancer:

- PSMA-RADS-1A: Benign lesions without radiotracer uptake

- PSMA-RADS-1B: Benign lesions with uptake

- PSMA-RADS-2: Low uptake in bone or soft-tissue sites atypical of prostate cancer

- PSMA-RADS-3A: Equivocal uptake in soft-tissue lesions typical of prostate cancer

- PSMA-RADS-3B: Equivocal uptake in bone lesions not clearly benign

- PSMA-RADS-3C: Lesions atypical for prostate cancer but with high uptake

- PSMA-RADS-3D: Lesions concerning for prostate cancer but lacking uptake

- PSMA-RADS-4: Lesions with high uptake typical for prostate cancer but lacking anatomic abnormality

- PSMA-RADS-5: Lesions with high uptake and anatomic findings indicative of prostate cancer

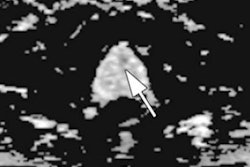

In their study, the Johns Hopkins team sought to develop an automated deep learning-based method for classifying lesions on these PET images. The team gathered 267 DCFPyL PSMA PET images from 267 prostate cancer patients. Four nuclear medicine physicians manually segmented each lesion, which was then assigned to one of the nine PSMA-RADS categories.

The dataset included 3,724 prostate cancer lesions, for an average of about 14 lesions per patient. Of these lesions, 70% were used for training and 15% for validation. The remaining 15% were set aside for testing the final model.
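The 70/15/15 partition described above can be sketched in a few lines of Python. This is a minimal illustration, not the researchers' procedure: the article does not say whether the split was random, stratified by category, or grouped by patient, so the random lesion-level shuffle, the `split_indices` helper, and the seed are all assumptions.

```python
import random

def split_indices(n_items, train_frac=0.70, val_frac=0.15, seed=0):
    """Shuffle item indices and cut them into train/validation/test partitions.

    Assumption: a plain random split at the lesion level. The study may have
    split differently (for example, by patient).
    """
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    n_train = round(n_items * train_frac)
    n_val = round(n_items * val_frac)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # whatever remains, roughly 15%
    return train, val, test

# 3,724 is the lesion count reported in the article.
train_idx, val_idx, test_idx = split_indices(3724)
print(len(train_idx), len(val_idx), len(test_idx))
```

With lesions from the same patient likely to resemble one another, a patient-level split is a common alternative that avoids the same patient appearing in both the training and test partitions.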

The 3D PET images were cropped to yield a cubic volume of interest (VOI) around the center of each lesion as input to the network. The CNN was trained to output a predicted PSMA-RADS score using the VOI of the lesion and the corresponding manual segmentation as inputs. The researchers then evaluated the algorithm on the test set.
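The cropping step can be illustrated with a small, dependency-free sketch. It assumes the volume is a nested list indexed `[z][y][x]`, and that a window running past the image edge is shifted back inside the volume; the article does not report the VOI size or how borders were handled, so `crop_cubic_voi`, `center`, and `size` are hypothetical names and choices. A real pipeline would more likely use an array library.

```python
def crop_cubic_voi(volume, center, size):
    """Crop a size x size x size cube from a 3D volume (nested lists, [z][y][x]).

    Assumption: the window is shifted where necessary so it stays inside
    the volume, rather than being zero-padded.
    """
    dims = (len(volume), len(volume[0]), len(volume[0][0]))
    if any(size > d for d in dims):
        raise ValueError("VOI size exceeds volume dimensions")
    starts = []
    for c, d in zip(center, dims):
        start = c - size // 2
        start = max(0, min(start, d - size))  # clamp to the volume bounds
        starts.append(start)
    z0, y0, x0 = starts
    return [
        [row[x0:x0 + size] for row in plane[y0:y0 + size]]
        for plane in volume[z0:z0 + size]
    ]

# Toy 6x6x6 "image" whose voxel value encodes its own coordinates (100z + 10y + x).
volume = [[[100 * z + 10 * y + x for x in range(6)] for y in range(6)] for z in range(6)]
# Toy binary mask standing in for a manual segmentation.
mask = [[[1 if (z, y, x) == (3, 3, 3) else 0 for x in range(6)] for y in range(6)] for z in range(6)]

# Crop the image and the mask with the same window so they stay aligned.
voi = crop_cubic_voi(volume, center=(3, 3, 3), size=4)
mask_voi = crop_cubic_voi(mask, center=(3, 3, 3), size=4)
```

Using one function for both the image and the segmentation mask keeps the two inputs voxel-aligned, which matters if they are fed to a network together.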

**Performance of algorithm for classifying prostate lesions by PSMA-RADS category**

| PSMA-RADS category | Area under the curve |
| --- | --- |
| PSMA-RADS-1A | 0.93 |
| PSMA-RADS-1B | 0.95 |
| PSMA-RADS-2 | 0.89 |
| PSMA-RADS-3A | 0.93 |
| PSMA-RADS-3B | 0.82 |
| PSMA-RADS-3C | 0.98 |
| PSMA-RADS-3D | 0.96 |
| PSMA-RADS-4 | 0.88 |
| PSMA-RADS-5 | 0.90 |

Overall accuracy was 67.4%.
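High per-category AUC values can coexist with a more modest overall accuracy, because the two metrics measure different things: AUC reflects how well scores rank one category against all others, while accuracy counts only lesions whose single top-scoring category is correct. The sketch below illustrates this with made-up scores for three hypothetical classes. It assumes a one-vs-rest AUC, which the article does not confirm; the function names and all numbers are illustrative and are not taken from the study.

```python
def one_vs_rest_auc(scores, labels, positive):
    """AUC for one class against all others, via the Mann-Whitney rank statistic."""
    pos = [s for s, y in zip(scores, labels) if y == positive]
    neg = [s for s, y in zip(scores, labels) if y != positive]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))

def accuracy(prob_rows, labels, classes):
    """Fraction of items whose highest-scoring class matches the true label."""
    correct = 0
    for probs, y in zip(prob_rows, labels):
        best = max(range(len(classes)), key=lambda i: probs[i])
        correct += classes[best] == y
    return correct / len(labels)

# Made-up scores for three hypothetical classes, NOT data from the study.
classes = ["A", "B", "C"]
labels = ["A", "A", "B", "B", "C", "C"]
probs = [
    [0.60, 0.30, 0.10],
    [0.40, 0.50, 0.10],  # true A, top score goes to B
    [0.30, 0.50, 0.20],
    [0.35, 0.45, 0.20],
    [0.10, 0.20, 0.70],
    [0.20, 0.45, 0.35],  # true C, top score goes to B
]

aucs = {
    c: one_vs_rest_auc([row[i] for row in probs], labels, c)
    for i, c in enumerate(classes)
}
overall = accuracy(probs, labels, classes)
print(aucs, round(overall, 3))
```

In this toy example the scores for classes A and C rank every true member above every non-member, yet two of the six items are still assigned the wrong top class, so accuracy falls well below the AUC values.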

"A deep learning-based approach for lesion classification in PSMA PET images was developed and showed significant promise towards automated classification of prostate cancer lesions," Leung said.