Top Story

Latest News

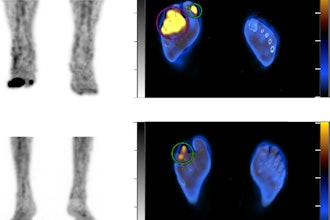

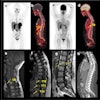

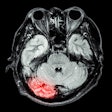

Cases of the Week

Check out our Cases of the Week!

More from AuntMinnie

Next Generation Radiology Workflow Tools

April 24, 2024

Voiant and Thirona enter commercial partnership

April 24, 2024

Spectral AI names CEO of Spectral IP, plans spin-off

April 24, 2024