Although the experience provided by an iPad for reviewing medical images still has room for improvement, the popular tablet can be employed for remote reading of emergency CT studies by radiologists on the move, according to research presented at the RSNA 2011 meeting in Chicago.

A study team from the Imperial College Healthcare National Health Service (NHS) Trust in the U.K. found that radiologists reading emergency CT cases on an iPad did not have a statistically significant increase in overall error rates compared with radiologists reading on PACS workstations. On the downside, a high error rate was found in CT pulmonary angiography (CTPA) studies, and the mean scores that radiologists gave their experience with the iPad fell between average and good.

"Overall iPad error rates are comparable to a workstation in both major and minor errors," said presenter Dr. Daniel Fascia. "CTPA error rates are not acceptable at 27% [for major errors], but confidence is lower reading CTPA and CT abdomen [studies], indicating that radiologists appreciate these limitations."

Modern emergency medicine is often guided by CT, generating increased demand and expectations on radiology services. This adds to an increasing workload for radiologists, Fascia said.

The ability to have mobile radiologists would allow faster access to an expert opinion 24 hours a day, support the notion of treatment decisions guided by imaging findings, and improve supervision of trainees, Fascia said. As a result, the research team sought to test its hypothesis that touchscreen tablet computers are suitable for mobile reading of emergency CT cases, and that radiologists using them have error rates comparable to readers using PACS workstations.

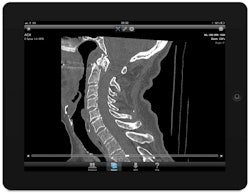

Image courtesy of Dr. Daniel Fascia.

In a double-blinded study format, 10 attending-grade radiologists were asked to read 10 emergency CT studies (including both body and neuro cases) on an iPad (version 1) running OsiriX HD 2.0.2 software. They were then asked to read the cases again on their traditional workstations.

The iPad has a 9.7-inch screen that provides a resolution of 1024 x 768 pixels, and the device's luminance was measured at 373 cd/m², Fascia said. It employs secure networking methods such as Advanced Encryption Standard (AES) encryption and virtual private network (VPN) connections.

After recording their interpretation of each case on the iPad in bullet-point format, the radiologists were asked whether, based on their user experience, they would be confident enough to issue a provisional report or a formal final report. They were also asked to rate the iPad for each study on a five-point scale of 5 (excellent), 4 (good), 3 (average), 2 (poor), or 1 (awful) for individual characteristics such as screen resolution, scrolling-through-stack capability, windowing, ease of use, and overall experience.

The iPad interpretations were then compared to the reports that were produced on the radiologists' PACS workstation. Each study was also reported by two radiologists to serve as the gold standard for the study. Errors between the devices were categorized as either major or minor based on the Royal College of Radiologists (RCR) discrepancy method, Fascia said.

Error rates, iPads versus PACS workstations

The researchers determined that while error rates were higher on the iPad than on standard workstations, the difference was not statistically significant for either major or minor errors (both p > 0.05).

The researchers then applied Cohen's kappa test to assess interdevice agreement between the iPad and the workstation. For major errors, the devices had a kappa coefficient of 0.68 (95% confidence interval [CI]: 0.42-0.94), which demonstrated very good concordance. For minor errors, however, the devices showed only moderate concordance, with a kappa coefficient of 0.59 (95% CI: 0.43-0.76).
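For readers unfamiliar with the statistic, Cohen's kappa compares the agreement actually observed between two sets of ratings with the agreement expected by chance. The Python sketch below is illustrative only: the function name `cohens_kappa` and the ten paired readings are hypothetical and are not the study's data or the researchers' code.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two equal-length sequences of categorical labels."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(ratings_a)
    # Observed agreement: fraction of cases where both labels match.
    p_o = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement, from each device's marginal label frequencies.
    count_a = Counter(ratings_a)
    count_b = Counter(ratings_b)
    p_e = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both sequences use one identical label throughout
    return (p_o - p_e) / (1 - p_e)


# Hypothetical example (not study data): 1 = major error recorded, 0 = none.
ipad = [1, 0, 0, 1, 0, 0, 1, 0, 0, 0]
workstation = [1, 0, 0, 0, 0, 0, 1, 0, 0, 0]
kappa = cohens_kappa(ipad, workstation)
print(f"kappa = {kappa:.2f}")
```

A kappa of 1 indicates perfect agreement and 0 indicates agreement no better than chance, which is why the coefficient is usually reported alongside a confidence interval, as in the study.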

iPad results by CT study type

"With a major error rate of 27%, the iPad is clearly not ready for viewing CTPAs," Fascia said. "However, if you look at the reporting confidence, it was only 20% in the formal reporting group, which indicates that people realize the limitations and are not prepared to issue reports."

Similarly low formal reporting confidence was seen in abdominal CT studies, despite the lack of major errors, Fascia said. Low rates of major and minor errors and high levels of reporting confidence were seen in head CT, CT of the kidneys, ureters, and bladder (KUB), and cervical spine (C-spine) CT studies, he said.

After each study, the researchers also asked radiologists to review their experience with the iPad based on five criteria. Mean qualitative scores for those criteria were as follows:

- Screen resolution: 4.1

- Scrolling through stack: 3.6

- Windowing: 3.7

- Ease of use: 3.8

- Overall experience: 3.8

"All fell in a tight band somewhere between average and good, indicating that we still have somewhere to go with the user experience on reporting with the iPad," Fascia said.

Fascia noted that the iPad represents the present, not the future, for tablet computers.

"The future is probably a platform-agnostic solution, perhaps with standards-compliant HTML5 DICOM viewers and, crucially, with no plug-ins and no dependencies," he said. "So you could look at DICOM [images] on anything from your TV to your wristwatch."